Hypothesis converging towards a global minimum. Otherwise, with a small alpha, our hypothesis would converge slowly, in small baby steps.

If the value of alpha (the learning rate) is large, gradient descent takes big steps, and if alpha is too large those steps can overshoot the minimum. We have to set the value of alpha manually, and how much the hypothesis changes at each step depends on it. With a suitable alpha, gradient descent gradually moves our hypothesis towards a global minimum, where the cost is lowest.
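To make the effect of alpha concrete, here is a minimal sketch of gradient descent for a straight-line hypothesis. The function name `gradient_descent`, the parameter names `theta0` and `theta1`, the toy data, and the three alpha values are all assumptions chosen for illustration; they are not from the original post.

```python
def gradient_descent(xs, ys, alpha, steps):
    """Fit y = theta0 + theta1 * x by batch gradient descent on squared error."""
    theta0, theta1 = 0.0, 0.0  # start from a flat line through the origin
    m = len(xs)
    for _ in range(steps):
        # Error of the current hypothesis on every data point.
        errors = [theta0 + theta1 * x - y for x, y in zip(xs, ys)]
        # Partial derivatives of the cost J = (1 / 2m) * sum(error^2).
        grad0 = sum(errors) / m
        grad1 = sum(e * x for e, x in zip(errors, xs)) / m
        # Move against the gradient; alpha scales the size of the step.
        theta0 -= alpha * grad0
        theta1 -= alpha * grad1
    return theta0, theta1


# Toy data lying exactly on the line y = 1 + 2x (illustrative only).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

small = gradient_descent(xs, ys, alpha=0.001, steps=1000)    # baby steps
moderate = gradient_descent(xs, ys, alpha=0.1, steps=1000)   # reasonable steps
large = gradient_descent(xs, ys, alpha=1.0, steps=20)        # steps too big

print("small alpha:   ", small)
print("moderate alpha:", moderate)
print("large alpha:   ", large)
```

On this toy data, the small alpha is still some way from the true line after 1000 steps, the moderate alpha gets very close to `theta0 = 1`, `theta1 = 2`, and the large alpha overshoots further on every step, so the parameters blow up instead of converging.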

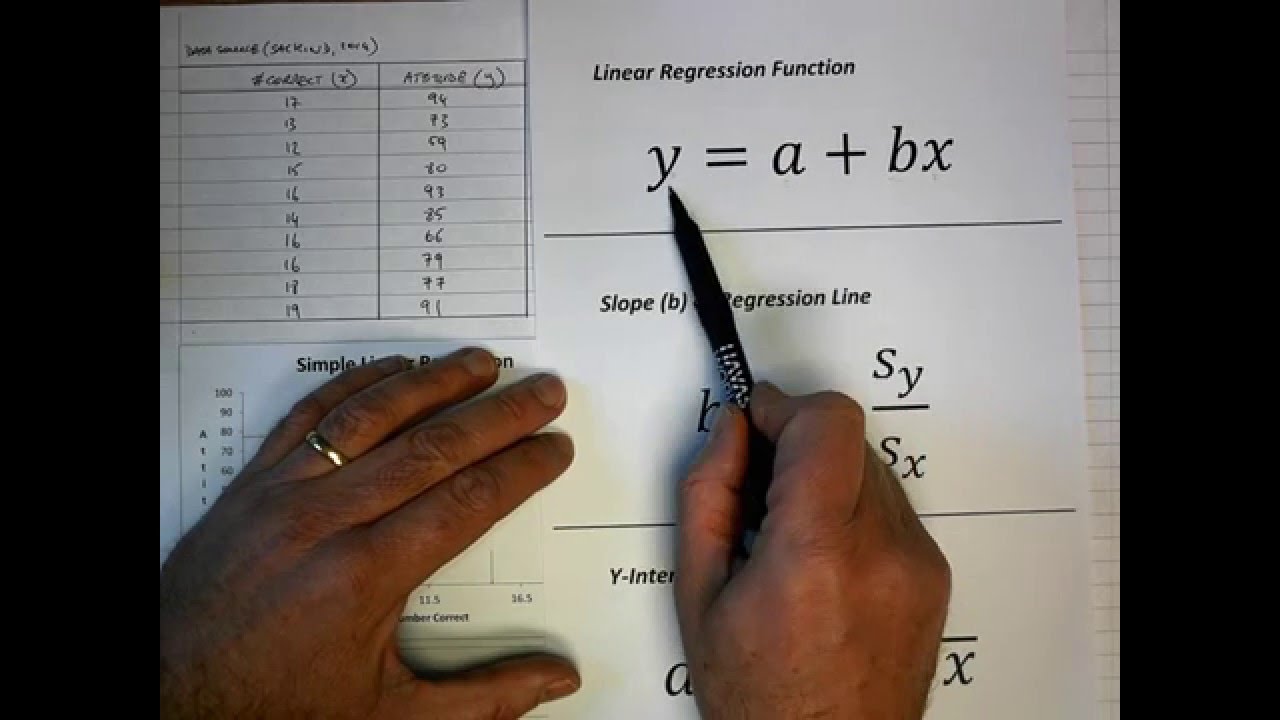

One of the most common and easiest methods for beginners to solve linear regression problems is gradient descent. Let's suppose we have our data plotted out in the form of a scatter graph. Our model makes a prediction for that data, and a cost function measures how far the prediction is from the actual values. This prediction can be very good, or it can be far away from our ideal prediction (meaning its cost will be high). So, in order to minimize that cost (error), we apply gradient descent to it.
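As a rough illustration of what "cost" means here, the sketch below scores two candidate lines against a few data points. It assumes a mean squared error cost with the common 1/(2m) scaling; the post does not say which cost function it uses, and the names `predict` and `cost` and the sample data are hypothetical.

```python
def predict(theta0, theta1, x):
    """Straight-line hypothesis: intercept theta0, slope theta1."""
    return theta0 + theta1 * x


def cost(theta0, theta1, xs, ys):
    """Mean squared error with 1/(2m) scaling (an assumed convention)."""
    m = len(xs)
    squared_errors = [(predict(theta0, theta1, x) - y) ** 2 for x, y in zip(xs, ys)]
    return sum(squared_errors) / (2 * m)


# Illustrative scatter data lying close to the line y = 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.1, 3.9, 6.2, 7.8]

good = cost(0.0, 2.0, xs, ys)   # line close to the data: low cost
bad = cost(5.0, -1.0, xs, ys)   # line far from the data: high cost

print("cost of good line:", good)
print("cost of bad line: ", bad)
```

The line that follows the data gets a much lower cost than the one that does not, and that number is what gradient descent tries to drive down.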
